What is back pressure in Spark Streaming?

Ava White

In summary, enabling backpressure is an important technique for making your Spark Streaming application production ready. It dynamically sets the message ingestion rate based on the performance of previous batches, which keeps your Spark Streaming application stable and efficient without the pitfalls of a statically capped maximum rate.
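To make the feedback idea concrete, here is a minimal sketch in plain Python of a rate controller that adjusts the ingestion rate from the previous batch's performance. It is a simplified proportional controller written for illustration, not Spark's internal estimator; the function names, the `gain` and `min_rate` parameters, and the numbers are all assumptions. In Spark Streaming itself the feature is switched on with the `spark.streaming.backpressure.enabled` configuration property.

```python
def next_ingestion_rate(current_rate, records, processing_secs,
                        gain=1.0, min_rate=100.0):
    """Return the ingestion rate (records/s) to use for the next batch.

    Hypothetical helper: compares the rate we ingested at with the rate
    the job actually processed at, and moves toward the latter.
    """
    if records <= 0 or processing_secs <= 0:
        return current_rate  # nothing to learn from an empty batch
    processing_rate = records / processing_secs
    error = current_rate - processing_rate  # > 0 means we ingest too fast
    return max(min_rate, current_rate - gain * error)


def simulate(capacity_per_sec, batch_interval_secs, initial_rate, batches):
    """Simulate a job with fixed capacity and return (rate, processing_secs) per batch."""
    rate = initial_rate
    history = []
    for _ in range(batches):
        records = rate * batch_interval_secs          # records ingested this batch
        processing_secs = records / capacity_per_sec  # time needed to process them
        history.append((rate, processing_secs))
        rate = next_ingestion_rate(rate, records, processing_secs)
    return history


if __name__ == "__main__":
    # Assumed numbers: the job can process 1000 records/s, batches are 2 s long,
    # and ingestion starts far too high at 5000 records/s.
    for rate, secs in simulate(1000.0, 2.0, 5000.0, 4):
        print(f"rate={rate:.0f} records/s, processing time={secs:.1f}s")
```

In this sketch the first batch takes far longer than the batch interval, after which the rate settles at the level the job can sustain. A static maximum rate would either stay too high for a slow job or needlessly throttle a fast one.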

What is back pressure in Spark?

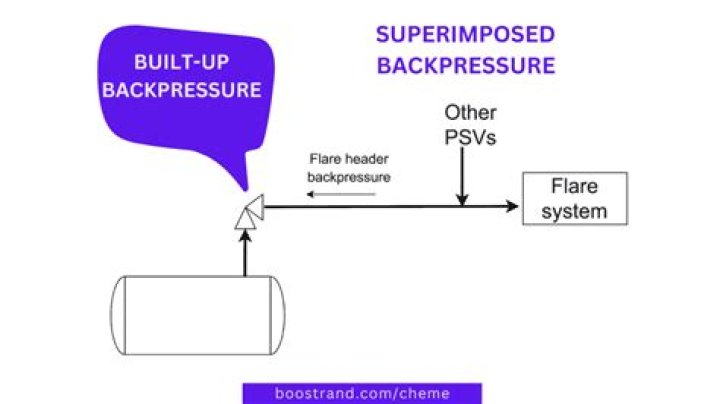

Backpressure refers to the situation where a system receives data at a higher rate than it can process, for example during a temporary load spike. A sudden spike in traffic can cause bottlenecks in downstream dependencies, which slows down stream processing.

What is a DStream?

Discretized Stream, or DStream, is the basic abstraction provided by Spark Streaming. It represents a continuous stream of data, either the input data stream received from the source or the processed data stream generated by transforming the input stream.

What is checkpointing in Spark Streaming?

Checkpointing is the process of writing received records to HDFS at checkpoint intervals. A streaming application is required to operate 24/7, so it must be resilient to failures unrelated to the application logic, such as system failures and JVM crashes.

Is Spark Streaming real-time?

Spark Streaming is an extension of the core Spark API that allows data engineers and data scientists to process real-time data from various sources including (but not limited to) Kafka, Flume, and Amazon Kinesis. The processed data can be pushed out to file systems, databases, and live dashboards.

What is the difference between Spark and Spark Streaming?

Spark Streaming is generally used for real-time processing, but it is the older, original RDD-based API. Spark Structured Streaming is the newer, highly optimized API, and users are advised to use it instead.

What is ETL in Spark?

ETL refers to the transfer and transformation of data from one system to another using data pipelines. Data is extracted from one or more sources, often to move it to a unified platform such as a data lake or a data warehouse to deliver analytics and business intelligence.

What is checkpoint in Databricks?

Azure Databricks uses the checkpoint directory to ensure correct and consistent progress information. When a stream is shut down, either purposely or accidentally, the checkpoint directory allows Azure Databricks to restart and pick up exactly where it left off.

What is batch interval in Spark Streaming?

The batch interval tells Spark how long to collect data for each batch. With a batch interval of 1 minute, for example, each batch contains the data received during the last minute. The data therefore arrives as a sequence of batches, and this continuous stream of data is called a DStream.

What is sliding window in Spark?

In networking, a sliding window controls the transmission of data packets between computers. In Spark, the Spark Streaming library provides windowed computations, where transformations on RDDs are applied over a sliding window of data.

What is DStream and RDD?

A Discretized Stream (DStream), the basic abstraction in Spark Streaming, is a continuous sequence of RDDs (of the same type) representing a continuous stream of data (see spark.RDD for more details on RDDs).

How do I improve my Spark application performance?

Apache Spark Performance Boosting

1. Join by broadcast.
2. Replace joins and aggregations with windows.
3. Minimize shuffles.
4. Cache properly.
5. Break the lineage: checkpointing.
6. Avoid using UDFs.
7. Tackle skewed data: salting and repartitioning.
8. Use proper file formats: Parquet.
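As an illustration of the salting idea from the list above, the following plain-Python sketch (no Spark dependency) spreads a hot key across several salted keys, aggregates per salted key, and then merges the partial results. The `crc32`-based partitioner, the `#` separator, and all function names are assumptions chosen for the example; in Spark the same two-stage pattern would typically be expressed with an extra salt column.

```python
import zlib
from collections import Counter


def partition_of(key, num_partitions):
    # Deterministic stand-in for a hash partitioner (illustrative only).
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def partition_loads(keys, num_partitions):
    """Count how many records land in each partition."""
    loads = Counter(partition_of(k, num_partitions) for k in keys)
    return [loads.get(p, 0) for p in range(num_partitions)]


def salted(key, index, num_salts):
    # Append a rotating salt so one hot key becomes num_salts distinct keys.
    return f"{key}#{index % num_salts}"


def count_with_salting(keys, num_salts):
    """Two-stage count: aggregate per salted key, then merge the partials."""
    partial = Counter(salted(k, i, num_salts) for i, k in enumerate(keys))
    totals = Counter()
    for salted_key, n in partial.items():
        original = salted_key.rsplit("#", 1)[0]  # strip the salt again
        totals[original] += n
    return dict(totals)


if __name__ == "__main__":
    keys = ["hot"] * 1000 + ["cold"] * 10  # heavily skewed input
    salted_keys = [salted(k, i, 8) for i, k in enumerate(keys)]
    print("loads without salt:", partition_loads(keys, 4))
    print("loads with salt:   ", partition_loads(salted_keys, 4))
    print("merged counts:     ", count_with_salting(keys, 8))
```

The merged counts match a direct count of the original keys, while the per-partition load of the first stage no longer depends on a single key.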

What is StreamingContext in Spark?

public class StreamingContext extends Object implements Logging. Main entry point for Spark Streaming functionality. It provides methods used to create DStreams from various input sources. It can be either created by providing a Spark master URL and an appName, or from a org. apache.

What causes back pressure?

A common example of backpressure is that caused by the exhaust system (consisting of the exhaust manifold, catalytic converter, muffler and connecting pipes) of an automotive four-stroke engine, which has a negative effect on engine efficiency, resulting in a decrease of power output that must be compensated by ...

What is backpressure in Kafka?

Kafka consumers pull records from the broker. This pull-based mechanism of consuming allows the consumer to stop requesting new records when the application or downstream components are overwhelmed with load.
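The pull-based behaviour can be sketched in plain Python as follows. `FakeBroker` and `run_consumer` are hypothetical stand-ins written for this example, not part of any Kafka client library; the point is only that the consumer asks for new records when it has spare capacity, so unread records simply wait on the broker.

```python
from collections import deque


class FakeBroker:
    """Hypothetical in-memory stand-in for a topic partition."""

    def __init__(self, records):
        self._log = deque(records)

    def poll(self, max_records):
        # Hand out at most max_records; the rest stay on the broker.
        batch = []
        while self._log and len(batch) < max_records:
            batch.append(self._log.popleft())
        return batch

    def remaining(self):
        return len(self._log)


def run_consumer(broker, max_in_flight, process_per_tick, poll_size, ticks):
    """Poll only while there is room, and process a fixed amount per tick."""
    in_flight = deque()
    processed = []
    max_seen = 0
    for _ in range(ticks):
        free = max_in_flight - len(in_flight)
        if free > 0:
            # Backpressure: request no more than we have room for.
            in_flight.extend(broker.poll(min(poll_size, free)))
        max_seen = max(max_seen, len(in_flight))
        for _ in range(min(process_per_tick, len(in_flight))):
            processed.append(in_flight.popleft())
    return processed, max_seen


if __name__ == "__main__":
    broker = FakeBroker(range(100))
    done, peak = run_consumer(broker, max_in_flight=10,
                              process_per_tick=3, poll_size=50, ticks=5)
    print("processed:", len(done), "peak buffered:", peak,
          "left on broker:", broker.remaining())
```

The buffered backlog never exceeds `max_in_flight`, no matter how many records the broker holds, which is the property a push-based producer cannot guarantee on its own.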